The proliferation of generative AI has created a problem that law enforcement can no longer ignore: AI-manipulated content is fundamentally undermining how we verify evidence authenticity. This is not a theoretical risk we need to plan for. It is happening now, in courtrooms, and it is forcing us to rethink decades of established practice.

The Deepfake Precedent That Changed Everything

In September 2025, an Alameda County judge dismissed a case and sanctioned the plaintiffs for intentionally submitting false evidence. This was not just another courtroom dispute. It was one of the first documented instances of deepfakes being deliberately submitted as evidence in civil proceedings.

The plaintiffs submitted multiple videos, photographs, and messaging screenshots presented as witness testimony or admissions. Upon review, the court determined that several exhibits were deepfakes or otherwise altered using generative AI, including video testimonials purporting to show statements of a key witness and photographs altered to insert individuals into camera footage.

The judge concluded that the use of deepfakes "fundamentally undermines the integrity of judicial proceedings" and imposed the most severe remedy available: dismissal with prejudice. The court stated it had zero tolerance for AI-generated falsifications presented as authentic evidence.

The outcome: case dismissed with prejudice, terminating sanctions imposed. The court also noted it lacked "the time, funding, or technical expertise" to assess whether additional exhibits had also been altered.

The implications are stark. We can no longer presume that digital recordings or video evidence are authentic. That longstanding assumption, the one that has underpinned countless investigations and prosecutions, has been fundamentally challenged. When content can be convincingly generated or manipulated by AI, traditional indicators of authenticity become unreliable.

The Vulnerabilities in Current Evidence Infrastructure

What concerns me most is this: law enforcement agencies are deploying evidence management systems without fully grasping AI's potential impact on data integrity. Yes, AI tools offer valuable capabilities for anomaly detection and analysis. But they also introduce transformation risks that have not been adequately examined.

The technical architecture creates inherent problems. AI analysis generally requires data to be decrypted before processing. That decryption creates exposure windows in which data sits unprotected in cloud environments, vulnerable to manipulation or unauthorized access by AI systems or cloud service providers.

Current detection methods are not solving this either. Technologies designed to identify AI-generated content have demonstrated inconsistent reliability and systematic bias. Human evaluation is equally inadequate for distinguishing authentic digital artifacts from AI-generated counterfeits.

No existing methodology can definitively classify whether text, audio, video, or images are authentic versus AI-generated, particularly after they leave a controlled environment.

What Vendors Need to Tell You

If you are evaluating evidence management systems, you need to ask harder questions. Vendor accountability requires transparent disclosure about AI implementation and its implications for chain of custody integrity and data security. You should be demanding answers to:

- How do their AI tools access cloud-based data, and under what conditions is source evidence decrypted?

- What happens to source data security when AI processing creates cloud provider exposure?

- What vulnerabilities are introduced through decryption requirements in the evidence chain?

- How does their system maintain integrity when files must be decrypted for analysis?

- Can they provide cryptographic proof that original evidence remains unaltered after AI processing?

Traditional authentication frameworks under the US Federal Rule of Evidence 901(b), including eyewitness testimony and metadata verification, are not sophisticated enough to reliably detect advanced AI-generated deepfakes. We are using decades-old authentication methods for challenges that did not exist when those methods were developed.

The Real-World Impact on Investigations

Law enforcement agencies face an escalating challenge: maintaining investigation effectiveness while digital evidence authenticity becomes increasingly difficult to verify with certainty. Modern criminal investigations prioritize digital evidence: emails, text messages, phone records, video footage, social media content, and cloud-stored information. In many cases, we value it above physical evidence. And now this critical evidence category faces systematic vulnerability to AI manipulation.

The AI Doubt Effect

In a 2023 lawsuit involving a Tesla crash, defense counsel challenged video evidence authenticity by simply suggesting it could be a deepfake. They did not need technical proof. Just introducing AI generation as plausible was enough to undermine the evidence's credibility. Jurors are overestimating the probability that evidence has been fabricated. It is similar to the CSI effect, but potentially more damaging because it undermines confidence in evidence itself.

Why Traditional Chain of Custody Is Not Enough Anymore

Conventional chain-of-custody logging provides insufficient protection against AI-era integrity challenges. The Alameda County case demonstrates what happens when evidence systems cannot definitively prove authenticity. Judges dismiss cases and sanction parties when doubt exists about evidence integrity.

Provable integrity requires robust zero-knowledge architecture combined with comprehensive encryption and cryptographic digital signatures. Digital signatures provide mathematical proof that files remain unaltered from creation, establishing verifiable, tamper-evident seals that can withstand judicial scrutiny.
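As a rough illustration of the tamper-evident seal described above, the sketch below hashes a file's bytes with SHA-256 and binds that digest to a key. It rests on stated assumptions: Python's standard library has no public-key signature primitive, so a keyed HMAC stands in here for a true digital signature, and a production system would presumably use an asymmetric scheme so that verifiers never hold the signing key. The function names `seal_evidence` and `verify_seal`, the sample key, and the sample bytes are all hypothetical.

```python
import hashlib
import hmac


def seal_evidence(data: bytes, key: bytes) -> dict:
    """Return a seal: the SHA-256 digest of the data plus a keyed tag over it."""
    digest = hashlib.sha256(data).hexdigest()
    # Assumption: HMAC is a stand-in for a public-key digital signature.
    tag = hmac.new(key, digest.encode("ascii"), hashlib.sha256).hexdigest()
    return {"sha256": digest, "tag": tag}


def verify_seal(data: bytes, seal: dict, key: bytes) -> bool:
    """Recompute the digest and tag; both must match the stored seal."""
    digest = hashlib.sha256(data).hexdigest()
    expected_tag = hmac.new(key, digest.encode("ascii"), hashlib.sha256).hexdigest()
    digest_ok = hmac.compare_digest(digest, seal["sha256"])
    tag_ok = hmac.compare_digest(expected_tag, seal["tag"])
    return digest_ok and tag_ok


if __name__ == "__main__":
    key = b"hypothetical-agency-key"          # illustrative only
    original = b"body camera footage bytes"   # illustrative only
    seal = seal_evidence(original, key)
    print(verify_seal(original, seal, key))         # True
    print(verify_seal(original + b"x", seal, key))  # False: one extra byte breaks the seal
```

A seal of this kind only shows that the bytes are unchanged since the moment of sealing, which is why sealing as close to capture as possible matters.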

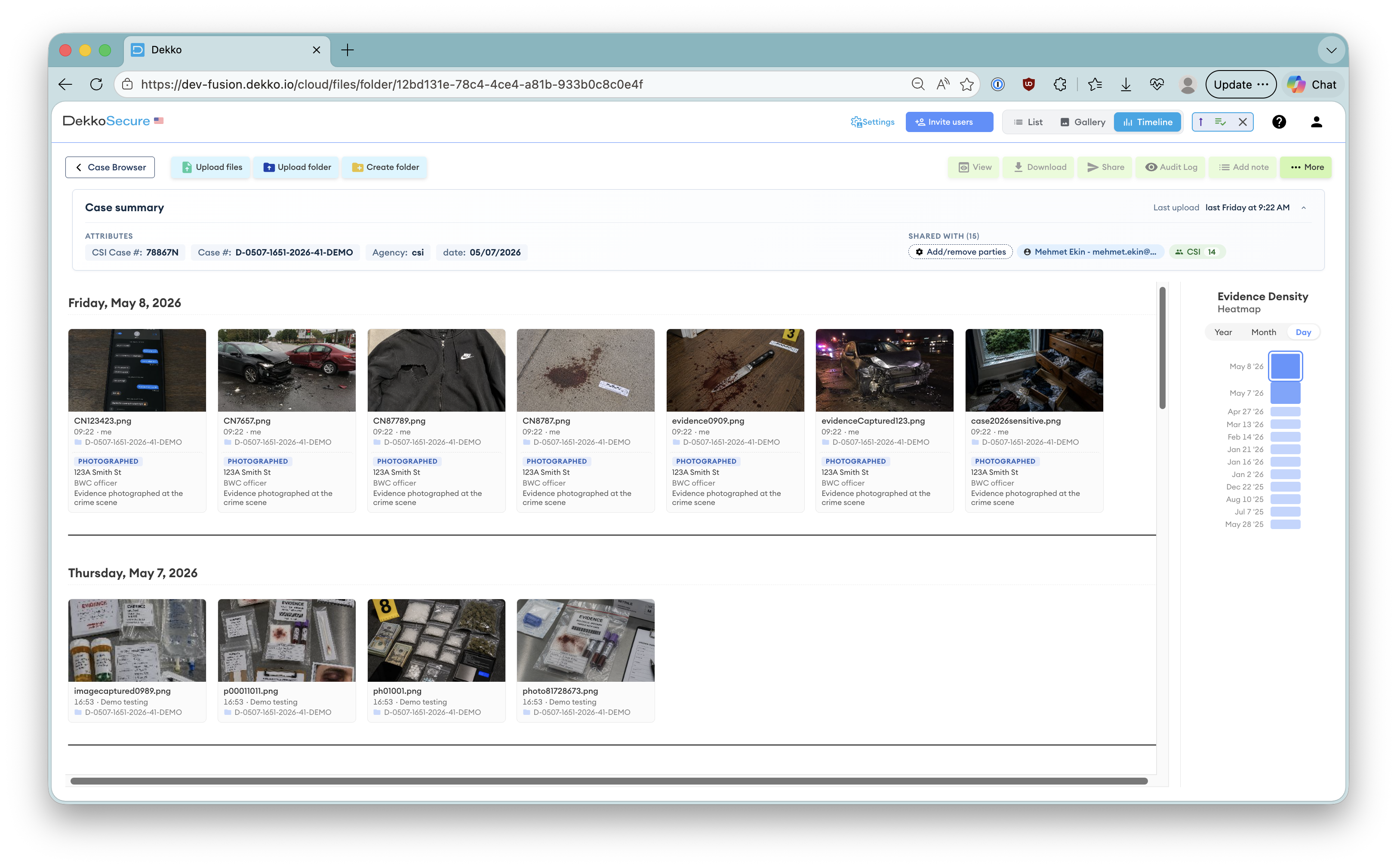

Practical implementation means this: technology providers and cloud vendors cannot access uploaded evidence such as body camera footage, case files, and investigative materials. Files stay encrypted throughout their lifecycle: at rest, in transit, and critically, during active processing. That "encrypted at work" component is the most frequently overlooked security element, and it is perhaps the most important.

- Zero-knowledge architecture: Technology providers and cloud vendors cannot access uploaded evidence. Access is restricted to authorized, authenticated users who control cryptographic keys.

- End-to-end encryption including at work: Files stay encrypted at rest, in transit, and during active processing. Decryption occurs only under controlled conditions with persistent audit trails.

- Cryptographic digital signatures: Digital signatures are computed over a unique cryptographic hash of each file, so any unauthorized modification after signing is detectable. The result is a tamper-evident record that answers the question of whether a file has been altered.

- AI analysis on file copies only: Zero-knowledge architecture preserves the source file's chain of custody. AI tools analyze copies of files, so the integrity of the originals remains intact throughout the process.
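The "file copies only" principle in the list above can be sketched roughly as follows: record the source file's SHA-256 digest, hand an analysis routine a temporary copy, then confirm the source digest is unchanged. This is a minimal sketch, not a description of any vendor's implementation. The helper names `sha256_file` and `analyze_on_copy` and the `analyze` callback are hypothetical, and the sketch does not cover encryption or key handling at all.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Stream a file through SHA-256 and return the hex digest."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def analyze_on_copy(source: Path, analyze) -> dict:
    """Run `analyze` on a temporary copy and verify the source is untouched."""
    before = sha256_file(source)
    with tempfile.TemporaryDirectory() as workdir:
        working_copy = Path(workdir) / source.name
        shutil.copyfile(source, working_copy)
        # Hypothetical callback: any tool that reads or transforms the copy.
        result = analyze(working_copy)
    after = sha256_file(source)
    if before != after:
        raise RuntimeError("source file changed during analysis")
    return {"source_sha256": before, "result": result}


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        src = Path(d) / "clip.bin"
        src.write_bytes(b"frame-data")  # illustrative stand-in for footage
        report = analyze_on_copy(src, lambda p: p.stat().st_size)
        print(report["result"])  # 10
```

The digest recorded before analysis can be stored alongside the analysis output, so a later reviewer can recompute it against the original file.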

Building Evidence Systems for the AI Era

The US Advisory Committee on Evidence Rules is addressing these challenges through regulatory updates. In November 2024, the committee considered proposed Rule 707, which would apply expert-witness reliability standards to machine-generated evidence. Louisiana has already enacted the nation's first state-level AI evidence verification framework.

Proposed Rule 707 applies exclusively to evidence that proponents acknowledge as AI-created, not to evidence with disputed authenticity. It offers limited protection against deepfakes and falsified evidence introduced under contested circumstances. Regulatory development timelines make passive waiting untenable as a risk management strategy.

Organizations managing sensitive investigations need verification capabilities integrated into evidence systems during initial architecture development, not retrofitted as supplementary security layers. Effective solutions demand cryptographic verification that establishes integrity without creating additional attack surfaces or vulnerability vectors.

The evidence integrity crisis is not an emerging future risk. It is a current operational reality. Law enforcement agencies that rely on legacy chain-of-custody protocols designed for pre-AI operational contexts remain substantially exposed.

The technology to address this exists. Zero-knowledge architecture, end-to-end encryption, and cryptographic digital signatures are not experimental concepts. They are proven approaches that can restore the evidentiary integrity we need.

The question is whether we will implement them before the integrity crisis deepens, or after more cases are dismissed because we could not prove what was real.

Chief Executive Officer, DekkoSecure

Jacqui Nelson is the CEO of DekkoSecure, an Australian cybersecurity company specializing in zero-knowledge secure file sharing, digital evidence management, and secure collaboration for government, law enforcement, defense, and critical infrastructure sectors.